About Me

I’m a postdoctoral researcher working at the intersection of neuroscience, computer science, and physics. I am currently a postdoctoral fellow in the Department of Physics and the Kempner Institute for the Study of Natural and Artificial Intelligence at Harvard University, and at the Center for Computational Neuroscience at the Flatiron Institute, where I work with Professor SueYeon Chung. I did my Ph.D. in theoretical computer science with Boaz Barak in the Theory of Computation Group at Harvard University. You can learn more about my research here.

Outside of work, I’m an avid baseball fan and enjoy playing piano, cooking, reading, playing Go, and listening to classical music.

Selected Recent Publications

Geometry Linked to Untangling Efficiency Reveals Structure and Computation in Neural Populations

Chi-Ning Chou, Royoung Kim, Luke Arend, Yao-Yuan Yang, Brett Mensh, Won Mok Shim, Matthew Perich, SueYeon Chung.

Under review.

[bioRxiv] [abstract] [bibtex] [demo page]

@article{Chou2024.02.26.582157,
  author = {Chou, Chi-Ning and Kim, Royoung and Arend, Luke and Yang, Yao-Yuan and Mensh, Brett D and Shim, Won Mok and Perich, Matthew G and Chung, SueYeon},
  title = {Geometry Linked to Untangling Efficiency Reveals Structure and Computation in Neural Populations},
  elocation-id = {2024.02.26.582157},
  year = {2024},
  doi = {10.1101/2024.02.26.582157},
  publisher = {Cold Spring Harbor Laboratory},
  journal = {bioRxiv}
}

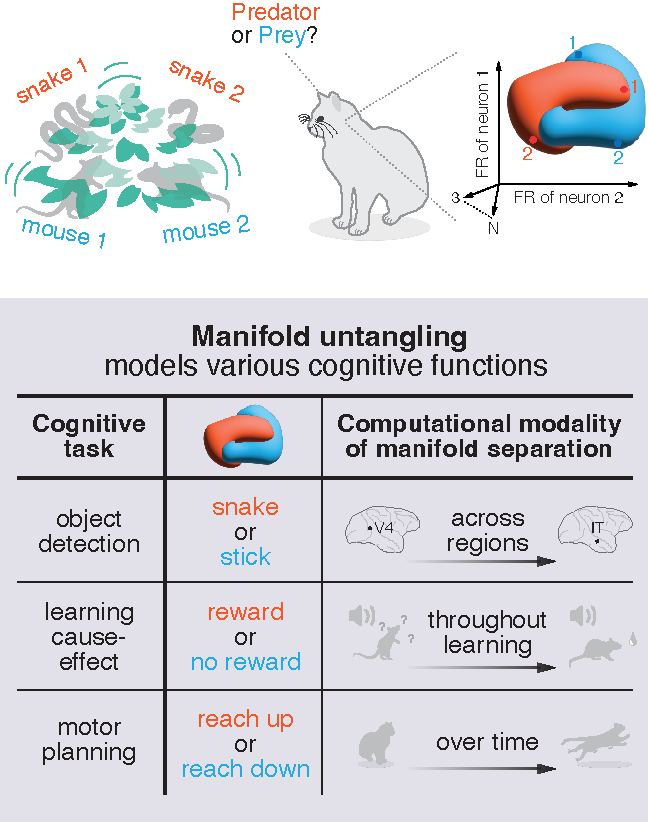

From an eagle spotting a fish in shimmering water to a scientist extracting patterns from noisy data, many cognitive tasks require untangling overlapping signals. Neural circuits achieve this by transforming complex sensory inputs into distinct, separable representations that guide behavior. Data-visualization techniques convey the geometry of these transformations, and decoding approaches quantify performance efficiency. However, we lack a framework for linking these two key aspects. Here we address this gap by introducing a data-driven analysis framework, which we call Geometry Linked to Untangling Efficiency (GLUE) with manifold capacity theory, that links changes in the geometrical properties of neural activity patterns to representational untangling at the computational level. We applied GLUE to over seven neuroscience datasets, spanning multiple organisms, tasks, and recording techniques, and found that task-relevant representations untangle in many domains, including along the cortical hierarchy, through learning, and over the course of intrinsic neural dynamics. Furthermore, GLUE can characterize the underlying geometric mechanisms of representational untangling, and explain how it facilitates efficient and robust computation. Beyond neuroscience, GLUE provides a powerful framework for quantifying information organization in data-intensive fields such as structural genomics and interpretable AI, where analyzing high-dimensional representations remains a fundamental challenge.

Diagnosing Generalization Failures from Representational Geometry Markers

Chi-Ning Chou, Artem Kirsanov, Yao-Yuan Yang, SueYeon Chung.

International Conference on Learning Representations (ICLR 2026).

Advances in Neural Information Processing Systems (NeurIPS UniReps Workshop 2025).

[arxiv] [conference version] [workshop version] [abstract] [bibtex] [demo page]

@inproceedings{chou2026diagnosing,
  title={Diagnosing Generalization Failures from Representational Geometry Markers},
  author={Chi-Ning Chou and Artem Kirsanov and Yao-Yuan Yang and SueYeon Chung},
  booktitle={The Fourteenth International Conference on Learning Representations},
  year={2026},
}

Generalization—the ability to perform well beyond the training context—is a hallmark of biological and artificial intelligence, yet anticipating unseen failures remains a central challenge. Conventional approaches often take a "bottom-up" mechanistic route by reverse-engineering interpretable features or circuits to build explanatory models. While insightful, these methods often struggle to provide the high-level, predictive signals for anticipating failure in real-world deployment. Here, we propose using a "top-down" approach to studying generalization failures inspired by medical biomarkers: identifying system-level measurements that serve as robust indicators of a model’s future performance. Rather than mapping out detailed internal mechanisms, we systematically design and test network markers to probe structure–function links, identify prognostic indicators, and validate predictions in real-world settings. In image classification, we find that task-relevant geometric properties of in-distribution (ID) object manifolds consistently forecast poor out-of-distribution (OOD) generalization. In particular, reductions in two geometric measures—effective manifold dimensionality and utility—predict weaker OOD performance across diverse architectures, optimizers, and datasets. We apply this finding to transfer learning with ImageNet-pretrained models. We consistently find that the same geometric patterns predict OOD transfer performance more reliably than ID accuracy. This work demonstrates that representational geometry can expose hidden vulnerabilities, offering more robust guidance for model selection and AI interpretability.
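The abstract does not define effective manifold dimensionality, and the paper's own measure comes from its geometric framework. As a rough stand-in only, a widely used proxy for the dimensionality of a point cloud is the participation ratio of its covariance eigenvalues, PR = (Σλ)² / Σλ². The minimal sketch below computes it per class manifold; substituting PR for the paper's measure is an assumption, and `participation_ratio` and `per_class_pr` are hypothetical helper names.

```python
import numpy as np


def participation_ratio(X: np.ndarray) -> float:
    """Participation ratio (sum of eigenvalues)^2 / (sum of squared eigenvalues)
    of the sample covariance of X, where rows are samples and columns are units."""
    Xc = X - X.mean(axis=0, keepdims=True)
    cov = Xc.T @ Xc / (X.shape[0] - 1)
    lam = np.clip(np.linalg.eigvalsh(cov), 0.0, None)  # clip tiny negative round-off
    return float(lam.sum() ** 2 / np.sum(lam ** 2))


def per_class_pr(features: np.ndarray, labels: np.ndarray) -> dict:
    """Participation ratio of each class manifold (all samples sharing a label)."""
    return {int(c): participation_ratio(features[labels == c]) for c in np.unique(labels)}


# Usage on synthetic data: class 0 varies along 3 latent directions, class 1 along 10,
# both linearly embedded in a 64-unit space.
rng = np.random.default_rng(0)
feats0 = rng.standard_normal((500, 3)) @ rng.standard_normal((3, 64))
feats1 = rng.standard_normal((500, 10)) @ rng.standard_normal((10, 64))
features = np.vstack([feats0, feats1])
labels = np.repeat([0, 1], 500)
print(per_class_pr(features, labels))
```

PR is bounded above by the rank of the covariance, so class 0 here can never exceed 3. Unlike a capacity-based measure, PR ignores labels and readout, which is one reason a task-relevant geometric measure may behave differently.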

Feature Learning beyond the Lazy-Rich Dichotomy: Insights from Representational Geometry

Chi-Ning Chou*, Hang Le*, Yichen Wang, SueYeon Chung.

International Conference on Machine Learning (ICML 2025).

Selected for Spotlight Presentation.

[arxiv] [conference version] [abstract] [bibtex] [demo page]

@inproceedings{CLWC25,
  title={Feature Learning beyond the Lazy-Rich Dichotomy: Insights from Representational Geometry},
  author={Chi-Ning Chou and Hang Le and Yichen Wang and SueYeon Chung},
  booktitle={Forty-second International Conference on Machine Learning},
  year={2025}
}

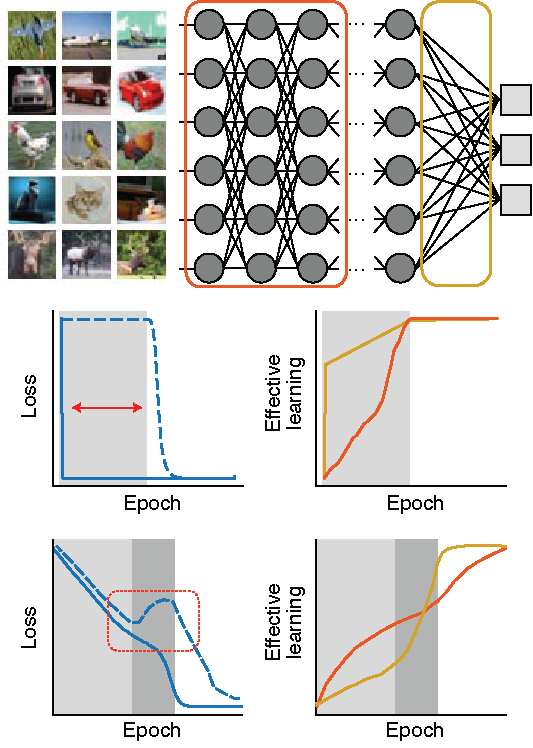

Integrating task-relevant information into neural representations is a fundamental ability of both biological and artificial intelligence systems. Recent theories have categorized learning into two regimes: the rich regime, where neural networks actively learn task-relevant features, and the lazy regime, where networks behave like random feature models. Yet this simple lazy–rich dichotomy overlooks a diverse underlying taxonomy of feature learning, shaped by differences in learning algorithms, network architectures, and data properties. To address this gap, we introduce an analysis framework to study feature learning via the geometry of neural representations. Rather than inspecting individual learned features, we characterize how task-relevant representational manifolds evolve throughout the learning process. We show, in both theoretical and empirical settings, that as networks learn features, task-relevant manifolds untangle, with changes in manifold geometry revealing distinct learning stages and strategies beyond the lazy–rich dichotomy. This framework provides novel insights into feature learning across neuroscience and machine learning, shedding light on structural inductive biases in neural circuits and the mechanisms underlying out-of-distribution generalization.
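A simple, commonly used diagnostic for where a network sits between the lazy and rich extremes (distinct from the manifold-geometry framework in the paper) is how far its hidden representation moves away from initialization. The sketch below measures this with linear centered kernel alignment (CKA; Kornblith et al., 2019) between features recorded before and after training on the same inputs. Values near 1 are consistent with lazy, random-feature-like behavior; lower values indicate the representation has changed. Note that linear CKA is invariant to rotations and isotropic rescaling, so it only detects changes beyond those. The helper name and the stand-in feature matrices are assumptions.

```python
import numpy as np


def linear_cka(X: np.ndarray, Y: np.ndarray) -> float:
    """Linear CKA between two representations of the same n inputs.

    X has shape (n, p), Y has shape (n, q); rows must correspond to the same inputs.
    Returns ||Yc^T Xc||_F^2 / (||Xc^T Xc||_F * ||Yc^T Yc||_F), a value in [0, 1].
    """
    Xc = X - X.mean(axis=0, keepdims=True)
    Yc = Y - Y.mean(axis=0, keepdims=True)
    cross = np.linalg.norm(Yc.T @ Xc, "fro") ** 2
    norm_x = np.linalg.norm(Xc.T @ Xc, "fro")
    norm_y = np.linalg.norm(Yc.T @ Yc, "fro")
    return float(cross / (norm_x * norm_y))


# Usage with stand-in feature matrices (in practice: hidden-layer activations
# on a fixed probe set, recorded at initialization and after training).
rng = np.random.default_rng(0)
feats_init = rng.standard_normal((200, 32))
Q, _ = np.linalg.qr(rng.standard_normal((32, 32)))
feats_rotated = 3.0 * feats_init @ Q          # rotation plus rescaling only
feats_new = rng.standard_normal((200, 32))    # unrelated representation
print(linear_cka(feats_init, feats_rotated))  # 1 up to rounding: invariant to rotation/scale
print(linear_cka(feats_init, feats_new))      # much lower
```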

Representational learning by optimization of neural manifolds in an olfactory memory network

Bo Hu*, Nesibe Z. Temiz*, Chi-Ning Chou, Peter Rupprecht, Claire Meissner-Bernard, Benjamin Titze, SueYeon Chung, Rainer W Friedrich

Under review.

[bioRxiv] [abstract] [bibtex]

@article{Hu2024.11.17.623906,
  author = {Hu, Bo and Temiz, Nesibe Z. and Chou, Chi-Ning and Rupprecht, Peter and Meissner-Bernard, Claire and Titze, Benjamin and Chung, SueYeon and Friedrich, Rainer W},
  title = {Representational learning by optimization of neural manifolds in an olfactory memory network},
  elocation-id = {2024.11.17.623906},
  year = {2024},
  doi = {10.1101/2024.11.17.623906},
  publisher = {Cold Spring Harbor Laboratory},
  journal = {bioRxiv}
}

Higher brain functions depend on experience-dependent representations of relevant information that may be organized by attractor dynamics or by geometrical modifications of continuous "neural manifolds". To explore these scenarios we analyzed odor-evoked activity in telencephalic area pDp of juvenile and adult zebrafish, the homolog of piriform cortex. No obvious signatures of attractor dynamics were detected. Rather, olfactory discrimination training selectively enhanced the separation of neural manifolds representing task-relevant odors from other representations, consistent with predictions of autoassociative network models endowed with precise synaptic balance. Analytical approaches using the framework of manifold capacity revealed multiple geometrical modifications of representational manifolds that supported the classification of task-relevant sensory information. Manifold capacity predicted odor discrimination across individuals, indicating a close link between manifold geometry and behavior. Hence, pDp and possibly related recurrent networks store information in the geometry of representational manifolds, resulting in joint sensory and semantic maps that may support distributed learning processes.

Current Research

How do brains and artificial neural networks support learning and generalization, and why do they sometimes fail? I develop mathematical theory and geometric analysis methods, drawing on statistical physics, theoretical computer science, and high-dimensional statistics, to answer these questions. My current work focuses on studying the underlying algorithms of learning in neural systems, predicting generalization performance, and diagnosing potential failure modes. A longer-term goal is to turn these theory-driven principles and hypotheses into causal and mechanistic understanding, informing both AI interpretability and the neural basis of cognitive disorders. I pursue these questions through three interconnected directions: (i) theory of neural representational geometry, (ii) geometric mechanisms of learning, memory, and perception, and (iii) science for deep learning and top-down interpretability. See here for more details on my research and here for all publications.

Theory of Neural Representational Geometry

Neuroscience · Statistical Physics · Active

As neural recordings scale to thousands of neurons across circuits and organisms, understanding how population-level neural activity patterns relate to behavior and cognition has become both urgent and tractable. How do geometric properties of neural representations relate to behavior, learning, and generalization? My collaborators and I develop analytical theory grounded in statistical physics, optimization theory, and integral geometry to formalize and measure these geometric signatures. A central contribution is GLUE (Geometry Linked to Untangling Efficiency), an analytical framework that links manifold geometric measures to untangling efficiency (the robustness of a linear readout under random projection), yielding tractable predictions for how representations support behavior and generalization.
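Manifold capacity theory generalizes a classic question: how many labeled patterns per neuron can a linear readout separate? For isolated points in general position, Cover's function-counting theorem answers it exactly, and the separable fraction collapses around P = 2N, i.e. a capacity of α = P/N = 2. The minimal sketch below reproduces only this point-case baseline; it is not GLUE itself, and `cover_separable_fraction` is a hypothetical name. Manifold capacity replaces points with whole manifolds, and the capacity then falls below 2 in a way that depends on geometric properties such as manifold radius and dimension.

```python
from math import comb


def cover_separable_fraction(P: int, N: int) -> float:
    """Fraction of the 2**P binary labelings of P points in general position
    in R^N that a linear readout through the origin can realize.

    Cover's function-counting theorem: C(P, N) = 2 * sum_{k<N} binom(P-1, k).
    math.comb returns 0 when k > P - 1, so P <= N yields a fraction of 1.
    """
    count = 2 * sum(comb(P - 1, k) for k in range(N))
    return count / 2**P


# The transition sharpens as N grows, with its midpoint at alpha = P/N = 2.
for N in (10, 50, 200):
    row = [cover_separable_fraction(int(alpha * N), N) for alpha in (1.0, 2.0, 3.0)]
    print(N, [round(f, 4) for f in row])
```

By symmetry of the binomial coefficients, the fraction at P = 2N is exactly 1/2 for every N, which is why α = 2 is the point-case capacity.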

[Related papers]

Geometry Linked to Untangling Efficiency Reveals Structure and Computation in Neural Populations

Chi-Ning Chou, Royoung Kim, Luke Arend, Yao-Yuan Yang, Brett Mensh, Won Mok Shim, Matthew Perich, SueYeon Chung.

Under review.

[bioRxiv] [abstract] [bibtex] [demo page]

Linear Readout of Neural Manifolds with Continuous Variables

Will Slatton*, Chi-Ning Chou*, SueYeon Chung.

In submission.

[arxiv] [abstract] [bibtex]

@article{SCC26,
  title={Linear Readout of Neural Manifolds with Continuous Variables},
  author={Will Slatton and Chi-Ning Chou and SueYeon Chung},
  year={2026},
  eprint={2603.10956},
  archivePrefix={arXiv},
  primaryClass={q-bio.NC},
  url={https://arxiv.org/abs/2603.10956},
}

Brains and artificial neural networks compute with continuous variables such as object position or stimulus orientation. However, the complex variability in neural responses makes it difficult to link internal representational structure to task performance. We develop a statistical-mechanical theory of regression capacity that relates linear decoding efficiency of continuous variables to geometric properties of neural manifolds. Our theory handles complex neural variability and applies to real data, revealing increasing capacity for decoding object position and size along the monkey visual stream.
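Regression capacity is a statistical-mechanical quantity, and the sketch below does not compute it. It only illustrates the operation the theory analyzes: a linear readout of a continuous variable (here a hypothetical stimulus value z) from population responses, fit by ridge-regularized least squares and scored with R². The synthetic linear tuning, the noise level, and the helper name `fit_linear_decoder` are assumptions for illustration.

```python
import numpy as np


def fit_linear_decoder(R: np.ndarray, z: np.ndarray, ridge: float = 1e-6):
    """Fit z ~ R @ w + b by ridge-regularized least squares.

    R: (n_trials, n_neurons) responses; z: (n_trials,) continuous variable.
    Returns (w, b, r2), with R^2 computed on the fitting data.
    """
    n, N = R.shape
    A = np.hstack([R, np.ones((n, 1))])  # append a bias column
    reg = ridge * np.eye(N + 1)
    reg[-1, -1] = 0.0                     # leave the bias unpenalized
    coef = np.linalg.solve(A.T @ A + reg, A.T @ z)
    z_hat = A @ coef
    r2 = 1.0 - np.sum((z - z_hat) ** 2) / np.sum((z - z.mean()) ** 2)
    return coef[:-1], coef[-1], float(r2)


# Synthetic population: each neuron responds linearly to z with a random gain,
# plus independent Gaussian noise.
rng = np.random.default_rng(0)
n_trials, n_neurons = 200, 50
z = rng.uniform(-1.0, 1.0, n_trials)
gains = rng.standard_normal(n_neurons)
R = np.outer(z, gains) + 0.05 * rng.standard_normal((n_trials, n_neurons))
w, b, r2 = fit_linear_decoder(R, z)
print(f"in-sample R^2 = {r2:.4f}")
```

In-sample R² is optimistic; with real recordings one would score the decoder on held-out trials.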

Nonlinear Classification of Neural Manifolds with Contextual Information

Francesca Mignacco, Chi-Ning Chou, SueYeon Chung.

Physical Review E (2025).

Selected for Editors’ Suggestion.

[journal version] [arxiv] [abstract] [bibtex]

@article{MCC25,
  title = {Nonlinear classification of neural manifolds with contextual information},
  author = {Mignacco, Francesca and Chou, Chi-Ning and Chung, SueYeon},
  journal = {Phys. Rev. E},
  volume = {111},
  issue = {3},
  pages = {035302},
  numpages = {8},
  year = {2025},
  month = {Mar},
  publisher = {American Physical Society},
  doi = {10.1103/PhysRevE.111.035302},
  url = {https://link.aps.org/doi/10.1103/PhysRevE.111.035302}
}

Understanding how neural systems efficiently process information through distributed representations is a fundamental challenge at the interface of neuroscience and machine learning. Recent approaches analyze the statistical and geometrical attributes of neural representations as population-level mechanistic descriptors of task implementation. In particular, manifold capacity has emerged as a promising framework linking population geometry to the separability of neural manifolds. However, this metric has been limited to linear readouts. Here, we propose a theoretical framework that overcomes this limitation by leveraging contextual input information. We derive an exact formula for the context-dependent capacity that depends on manifold geometry and context correlations, and validate it on synthetic and real data. Our framework's increased expressivity captures representation untanglement in deep networks at early stages of the layer hierarchy, previously inaccessible to analysis. As context-dependent nonlinearity is ubiquitous in neural systems, our data-driven and theoretically grounded approach promises to elucidate context-dependent computation across scales, datasets, and models.
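The abstract does not spell out the form of the context-dependent readout, so the toy below is a hypothetical illustration of the general idea rather than the paper's model: XOR-labeled points cannot be split by any single linear readout, but if a binary context signal selects between two linear readouts, every point is classified correctly. Here the context is assumed to be the sign of the first coordinate, and the two weight vectors are set by hand.

```python
import numpy as np

# Four points with XOR labels: no single linear readout (even with a bias)
# separates the +1 points from the -1 points.
X = np.array([[-1.0, -1.0], [-1.0, 1.0], [1.0, -1.0], [1.0, 1.0]])
y = np.array([-1, 1, 1, -1])

# Hypothetical binary context for each point (assumption: sign of x1).
context = (X[:, 0] > 0).astype(int)

# One hand-set linear readout per context; the context picks which one applies.
W = np.array([
    [0.0, 1.0],   # context 0: predict sign(x2)
    [0.0, -1.0],  # context 1: predict -sign(x2)
])
scores = np.sum(W[context] * X, axis=1)  # row-wise dot product w_c . x
pred = np.sign(scores).astype(int)
print(pred, bool(np.array_equal(pred, y)))
```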

Geometric Mechanisms of Learning, Memory, and Perception

Neuroscience · Active

Since Hubel and Wiesel’s pioneering work, single-neuron selectivity analysis has driven neuroscience for more than half a century. With the advent of simultaneous large-scale neural recordings, we can now investigate the neural underpinnings of learning, memory consolidation, and behavioral adaptation at the population level. Working with experimental collaborators, we apply geometric analysis methods to large-scale neural data across circuits and organisms (from olfactory memory networks in zebrafish to auditory cortex in rodents to motor cortex in primates) and across recording methods (from electrophysiological probes to calcium imaging to fMRI). Using geometric measures to characterize representational untangling and learning strategies, we uncover both shared computational principles and task-specific mechanisms across these diverse biological systems.

[Related papers]

Geometry Linked to Untangling Efficiency Reveals Structure and Computation in Neural Populations

Chi-Ning Chou, Royoung Kim, Luke Arend, Yao-Yuan Yang, Brett Mensh, Won Mok Shim, Matthew Perich, SueYeon Chung.

Under review.

[bioRxiv] [abstract] [bibtex] [demo page]

Representational learning by optimization of neural manifolds in an olfactory memory network

Bo Hu*, Nesibe Z. Temiz*, Chi-Ning Chou, Peter Rupprecht, Claire Meissner-Bernard, Benjamin Titze, SueYeon Chung, Rainer W Friedrich

Under review.

[bioRxiv] [abstract] [bibtex]

Probing Biological and Artificial Neural Networks with Task-dependent Neural Manifolds

Michael Kuoch*, Chi-Ning Chou*, Nikhil Parthasarathy, Joel Dapello, James J DiCarlo, Haim Sompolinsky, SueYeon Chung.

Conference on Parsimony and Learning (CPAL 2024).

[arxiv] [conference version] [abstract] [bibtex]

@inproceedings{KCPDDSC24,
  title={Probing Biological and Artificial Neural Networks with Task-dependent Neural Manifolds},
  author={Michael Kuoch and Chi-Ning Chou and Nikhil Parthasarathy and Joel Dapello and James J. DiCarlo and Haim Sompolinsky and SueYeon Chung},
  booktitle={Conference on Parsimony and Learning (Proceedings Track)},
  year={2023},
  url={https://openreview.net/forum?id=MxBS6aw5Gd}
}

In recent years, growth in our understanding of the computations performed in both biological and artificial neural networks has largely been driven by either low-level mechanistic studies or global normative approaches. However, concrete methodologies for bridging the gap between these levels of abstraction remain elusive. In this work, we investigate the internal mechanisms of neural networks through the lens of neural population geometry, aiming to provide understanding at an intermediate level of abstraction, as a way to bridge that gap. Utilizing manifold capacity theory (MCT) from statistical physics and manifold alignment analysis (MAA) from high-dimensional statistics, we probe the underlying organization of task-dependent manifolds in deep neural networks and neural recordings from the macaque visual cortex. Specifically, we quantitatively characterize how different learning objectives lead to differences in the organizational strategies of these models and demonstrate how these geometric analyses are connected to the decodability of task-relevant information. Furthermore, these metrics show that macaque visual cortex data are more similar to unsupervised DNNs in terms of geometrical properties such as manifold position and manifold alignment. These analyses present a strong direction for bridging mechanistic and normative theories in neural networks through neural population geometry, potentially opening up many future research avenues in both machine learning and neuroscience.
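The abstract does not define manifold alignment analysis (MAA) precisely. One standard way to quantify how aligned two manifolds are is through the principal angles between their linear subspaces, for example the spans of each manifold's top principal components. The sketch below computes those angles with a QR decomposition followed by an SVD; treating this as a stand-in for MAA is an assumption, and `principal_angles` and `top_pc_basis` are hypothetical names.

```python
import numpy as np


def principal_angles(A: np.ndarray, B: np.ndarray) -> np.ndarray:
    """Principal angles in radians (ascending) between the column spans of A and B.

    A: (n, k1), B: (n, k2), each with linearly independent columns.
    """
    Qa, _ = np.linalg.qr(A)  # orthonormal basis for span(A)
    Qb, _ = np.linalg.qr(B)  # orthonormal basis for span(B)
    s = np.linalg.svd(Qa.T @ Qb, compute_uv=False)  # cosines of the angles, descending
    return np.arccos(np.clip(s, -1.0, 1.0))


def top_pc_basis(points: np.ndarray, k: int) -> np.ndarray:
    """Top-k principal directions (as columns) of a manifold's point cloud."""
    centered = points - points.mean(axis=0, keepdims=True)
    _, _, Vt = np.linalg.svd(centered, full_matrices=False)
    return Vt[:k].T


# Usage: compare the 2-D subspaces spanned by two synthetic manifolds in R^20.
rng = np.random.default_rng(0)
m1 = rng.standard_normal((300, 2)) @ rng.standard_normal((2, 20))
m2 = rng.standard_normal((300, 2)) @ rng.standard_normal((2, 20))
print(np.degrees(principal_angles(top_pc_basis(m1, 2), top_pc_basis(m2, 2))))
```

Angles near 0 indicate manifolds that occupy nearly the same subspace; angles near 90 degrees indicate nearly orthogonal ones.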

Sensory cortex plasticity supports auditory social learning

Nihaad Paraouty, Justin D. Yao, Léo Varnet, Chi-Ning Chou, SueYeon Chung, Dan H. Sanes.

Nature Communications (2023).

[journal version] [abstract] [bibtex]

@article{paraouty2023sensory,
  title={Sensory cortex plasticity supports auditory social learning},
  author={Paraouty, Nihaad and Yao, Justin D and Varnet, L{\'e}o and Chou, Chi-Ning and Chung, SueYeon and Sanes, Dan H},
  journal={Nature Communications},
  volume={14},
  number={1},
  pages={5828},
  year={2023},
  publisher={Nature Publishing Group UK London}
}

Social learning (SL) through experience with conspecifics can facilitate the acquisition of many behaviors. Thus, when Mongolian gerbils are exposed to a demonstrator performing an auditory discrimination task, their subsequent task acquisition is facilitated, even in the absence of visual cues. Here, we show that transient inactivation of auditory cortex (AC) during exposure caused a significant delay in task acquisition during the subsequent practice phase, suggesting that AC activity is necessary for SL. Moreover, social exposure induced an improvement in AC neuron sensitivity to auditory task cues. The magnitude of neural change during exposure correlated with task acquisition during practice. In contrast, exposure to only auditory task cues led to poorer neurometric and behavioral outcomes. Finally, social information during exposure was encoded in the AC of observer animals. Together, our results suggest that auditory SL is supported by AC neuron plasticity occurring during social exposure and prior to behavioral performance.

Science for Deep Learning and Top-Down Interpretability

Machine Learning · Statistical Physics · Active

Deep neural networks have achieved remarkable empirical success, yet we lack a principled understanding of how they learn and generalize, and of when they fail. This calls both for a scientific understanding of the nature of learning in neural systems and for interpretability research that supports reliable and safe AI deployment. Most existing approaches either take a bottom-up route, reverse-engineering circuits, features, or algorithms, or develop mathematical theories for toy models. We complement both with a top-down approach inspired by the success of statistical physics, using geometric analysis of representations as a task-agnostic framework that addresses both questions simultaneously. Applying this framework across vision and language models, we study feature learning strategies, explain anomalous generalization phenomena, and diagnose out-of-distribution failure modes.

[Related papers]

Two Speeds of Learning: A Representation-Readout Decomposition of Grokking and Double Descent

Chi-Ning Chou*, Oscar Uzdelewicz*, Neng-Chun Chiu, Yao-Yuan Yang, SueYeon Chung.

[arxiv] [abstract] [bibtex]

@misc{chou2026speedslearningrepresentationreadoutdecomposition,
  title={Two Speeds of Learning: A Representation-Readout Decomposition of Grokking and Double Descent},
  author={Chi-Ning Chou and Oscar Uzdelewicz and Neng-Chun Chiu and Yao-Yuan Yang and SueYeon Chung},
  year={2026},
  eprint={2605.27078},
  archivePrefix={arXiv},
  primaryClass={cs.LG},
  url={https://arxiv.org/abs/2605.27078},
}

Training loss and accuracy are the standard signals used to monitor generalization during deep neural network training. Two well-documented phenomena complicate this picture: in grokking, train loss falls rapidly while test performance improves abruptly only after a long delay; in epoch-wise double descent, train loss decreases monotonically while test loss or error rises and falls. Existing accounts are often task-specific, and a task-agnostic analysis framework for diagnosing and explaining these phenomena across realistic tasks and architectures is missing. We address this challenge by analyzing two competing processes that underlie learning dynamics: representation learning in the encoder and readout calibration in the final classifier. Using tools from representational geometry, neural tangent kernels, and linear probing, we show that both processes are active throughout training, with the fluctuations of their relative speed giving rise to seemingly anomalous generalization dynamics. Applying the representation-readout decomposition to grokking across a wide range of tasks and architectures, we find that the readout is train-biased before grokking onset, and representation learning is gradual but not absent, contrary to the lazy-to-rich account. The framework further provides diagnostic signatures distinguishing spurious from genuine generalization: in a previously reported MNIST grokking example and an epoch-wise double descent example, apparent delayed or non-monotone generalization is shown to arise from representation degradation and readout misalignment induced by non-standard training recipes. Together, these results establish the representation-readout decomposition as a top-down framework for understanding learning dynamics and revealing underlying algorithms for interpretability research.
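The representation-readout decomposition separates how well the encoder organizes task information from how well the final classifier uses it. A minimal, hypothetical illustration of the readout side (the paper additionally uses representational geometry and neural tangent kernels, which are not reproduced here): fit a fresh linear probe on frozen features and compare its test accuracy with that of the model's own readout on the same features. A large positive gap suggests the representation already supports the task while the readout lags. The ridge least-squares probe, the synthetic features, and all helper names are assumptions.

```python
import numpy as np


def _with_bias(F: np.ndarray) -> np.ndarray:
    return np.hstack([F, np.ones((F.shape[0], 1))])


def fit_probe(F: np.ndarray, y: np.ndarray, n_classes: int, ridge: float = 1e-3) -> np.ndarray:
    """Ridge least-squares linear probe on frozen features F (n, d) with one-hot
    targets. Returns weights of shape (d + 1, n_classes); the last row is the bias."""
    A = _with_bias(F)
    T = np.eye(n_classes)[y]
    return np.linalg.solve(A.T @ A + ridge * np.eye(A.shape[1]), A.T @ T)


def accuracy(F: np.ndarray, W: np.ndarray, y: np.ndarray) -> float:
    return float(np.mean(np.argmax(_with_bias(F) @ W, axis=1) == y))


def readout_gap(F_tr, y_tr, F_te, y_te, W_model, n_classes):
    """Test accuracy of a fresh probe minus test accuracy of the model's readout.
    W_model is assumed to use the same (d + 1, n_classes) layout as fit_probe."""
    W_probe = fit_probe(F_tr, y_tr, n_classes)
    return accuracy(F_te, W_probe, y_te) - accuracy(F_te, W_model, y_te)


# Usage with synthetic, well-separated class clusters as stand-in features.
rng = np.random.default_rng(0)
C, d, n = 3, 10, 300
centers = 5.0 * np.eye(C, d)
y_tr, y_te = rng.integers(0, C, n), rng.integers(0, C, n)
F_tr = centers[y_tr] + rng.standard_normal((n, d))
F_te = centers[y_te] + rng.standard_normal((n, d))
W_aligned = fit_probe(F_tr, y_tr, C)
W_misaligned = np.roll(W_aligned, 1, axis=1)  # hypothetical miscalibrated readout
print(readout_gap(F_tr, y_tr, F_te, y_te, W_aligned, C))     # 0.0: readout matches probe
print(readout_gap(F_tr, y_tr, F_te, y_te, W_misaligned, C))  # large positive gap
```

Tracking such a gap over training is one way to see the two processes move at different speeds; the column-rolled readout here is an artificial extreme chosen only to make the gap obvious.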

Diagnosing Generalization Failures from Representational Geometry Markers

Chi-Ning Chou, Artem Kirsanov, Yao-Yuan Yang, SueYeon Chung.

International Conference on Learning Representations (ICLR 2026).

Advances in Neural Information Processing Systems (NeurIPS UniReps Workshop 2025).

[arxiv] [conference version] [workshop version] [abstract] [bibtex] [demo page]

Feature Learning beyond the Lazy-Rich Dichotomy: Insights from Representational Geometry

Chi-Ning Chou*, Hang Le*, Yichen Wang, SueYeon Chung.

International Conference on Machine Learning (ICML 2025).

Selected for Spotlight Presentation.

[arxiv] [conference version] [abstract] [bibtex] [demo page]

The Geometry of Prompting: Unveiling Distinct Mechanisms of Task Adaptation in Language Models

Artem Kirsanov, Chi-Ning Chou, Kyunghyun Cho, SueYeon Chung.

Annual Conference of the Nations of the Americas Chapter of the ACL (NAACL 2025).


@inproceedings{KCCC25,
  title={The Geometry of Prompting: Unveiling Distinct Mechanisms of Task Adaptation in Language Models},
  author={Artem Kirsanov and Chi-Ning Chou and Kyunghyun Cho and SueYeon Chung},
  booktitle={The 2025 Annual Conference of the Nations of the Americas Chapter of the ACL (NAACL 2025)},
  year={2025},
}

Decoder-only language models can dynamically switch between various computational tasks based on input prompts. Despite many successful applications of prompting, the internal mechanism behind such flexibility remains poorly understood. In this work, we investigate how different prompting methods affect the geometry of representations in these models. Employing a framework grounded in statistical physics, we reveal that various prompting techniques, while achieving similar performance, operate through distinct representational mechanisms for task adaptation. Our analysis highlights the critical role of input distribution samples and label semantics in few-shot in-context learning. We also find evidence of synergistic and interfering interactions between different tasks at the representational level. Our work contributes to the theoretical understanding of large language models and lays the groundwork for developing more effective, representation-aware prompting strategies.

Task-Relevant Covariance from Manifold Capacity Theory Improves Robustness in Deep Networks

William Yang, Chi-Ning Chou, SueYeon Chung.

NeurIPS 2024 Workshop on Unifying Representations in Neural Models (UniReps 2024).


@inproceedings{yang2024taskrelevant,
  title={Task-Relevant Covariance from Manifold Capacity Theory Improves Robustness in Deep Networks},
  author={William Yang and Chi-Ning Chou and SueYeon Chung},
  booktitle={UniReps: 2nd Edition of the Workshop on Unifying Representations in Neural Models},
  year={2024},
}

Analysis of high-dimensional representations in neuroscience and deep learning traditionally places equal importance on all points in a representation, potentially leading to significant information loss. Recent advances in manifold capacity theory offer a principled framework for identifying the computationally relevant points on neural manifolds. In this work, we introduce the concept of task-relevant class covariance to identify directions in representation space that support class discriminability. We demonstrate that scaling representations along these directions markedly improves simulated accuracy under distribution shift. Building on these insights, we propose AnchorBlocks, architectural modules that use task-relevant class covariance to align representations with a task-relevant eigenspace. By appending one AnchorBlock to ResNet18, we achieve competitive performance on a standard robustness benchmark (CIFAR-10-C) against much larger robustness-promoting architectures. Our findings provide insight into neural population geometry and into methods for interpreting and building robust deep learning systems.

Probing Biological and Artificial Neural Networks with Task-dependent Neural Manifolds

Michael Kuoch*, Chi-Ning Chou*, Nikhil Parthasarathy, Joel Dapello, James J. DiCarlo, Haim Sompolinsky, SueYeon Chung.

Conference on Parsimony and Learning (CPAL 2024).


@inproceedings{KCPDDSC24,
  title={Probing Biological and Artificial Neural Networks with Task-dependent Neural Manifolds},
  author={Michael Kuoch and Chi-Ning Chou and Nikhil Parthasarathy and Joel Dapello and James J. DiCarlo and Haim Sompolinsky and SueYeon Chung},
  booktitle={Conference on Parsimony and Learning (Proceedings Track)},
  year={2023},
  url={https://openreview.net/forum?id=MxBS6aw5Gd}
}

In recent years, growth in our understanding of the computations performed in both biological and artificial neural networks has largely been driven by either low-level mechanistic studies or global normative approaches. However, concrete methodologies for bridging the gap between these levels of abstraction remain elusive. In this work, we investigate the internal mechanisms of neural networks through the lens of neural population geometry, aiming to provide understanding at an intermediate level of abstraction as a way to bridge that gap. Using manifold capacity theory (MCT) from statistical physics and manifold alignment analysis (MAA) from high-dimensional statistics, we probe the underlying organization of task-dependent manifolds in deep neural networks and in neural recordings from the macaque visual cortex. Specifically, we quantitatively characterize how different learning objectives lead to differences in the organizational strategies of these models and demonstrate how these geometric analyses are connected to the decodability of task-relevant information. Furthermore, these metrics show that macaque visual cortex data are more similar to unsupervised DNNs in terms of geometrical properties such as manifold position and manifold alignment. These analyses offer a promising direction for bridging mechanistic and normative theories of neural networks through neural population geometry, potentially opening up many future research avenues in both machine learning and neuroscience.

Contact

Email: